Carme Puche Moré (Barcelona, 1977)

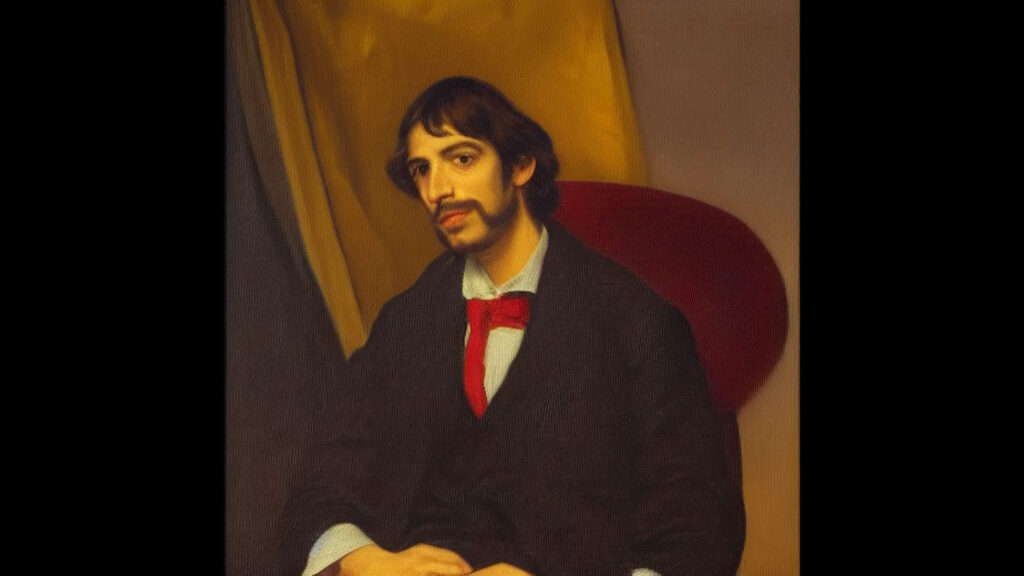

My Word, 2023. Video.

https://www.carmepuche.com/my-word

My Word is an audiovisual project that uses latent diffusion models (LDMs) to highlight the ideological biases of artificial intelligence. Throughout the video, a voice introduces prompts that define identities and professions, while we observe how AI responds with images modelled on patriarchal and colonial imaginaries. This is what happens in the sequence to which this image belongs. The prompt is: ‘I am a doctor. Not that one. I am a female doctor. Not a white female doctor. A black one.’ Although the text incorporates successive clarifications, the AI initially produces portraits of white men, possibly European.

Contextualization

Digital racism is a contemporary form of racism that manifests, reproduces and amplifies itself through digital technologies. A critical approach to the phenomenon involves understanding that it is not only about racist discourse on social media, but also about a broader structural logic that explains how digital systems – algorithms, databases, artificial intelligence, platforms, among others – actively participate in the production, reproduction, and management of racial inequalities based on dominant social, economic, and political interests.

Various researchers and activists who analyse and combat digital racism (Benjamin, 2019; Phan and Wark, 2021; Eubanks, 2018; Noble, 2018) point out that it is particularly difficult to identify the inequalities generated by new technologies, as these are often presented as more ‘objective’, ‘neutral’ or even ‘progressive’ than traditional forms of social control and management.

The supposed neutrality of technology is a fallacy. By not operating through explicit racial distinctions, algorithms appear immune to bias. However, it is precisely this appearance of neutrality that renders the racial biases they incorporate invisible, making them more difficult to detect and, therefore, more dangerous. Algorithms do not start from scratch: their operation depends on large volumes of data that reflect a history of inequality, exclusion and discrimination. In this way, technology not only reproduces pre-existing structures of oppression, but also consolidates them and makes them less transparent.

The development of science and technology has historically been conditioned by power structures that have perpetuated forms of oppression based on race, class and gender. Consequently, contemporary technologies, including artificial intelligence, are not neutral: they replicate and, in many cases, subtly intensify these systemic inequalities.

From this perspective, various critical voices warn that tools such as artificial intelligence, Big Data and so-called ‘smart’ technologies are contributing to the automation of racial discrimination. This implies that racial bias is integrated into the very logic of how technological systems operate, allowing racism not only to persist, but to adapt, become more efficient and operate in an increasingly silent manner.

Finally, a look at technological infrastructures – from their material foundations to their ideological logics – allows us to understand that race and racism are not external or accidental elements, but rather constitutive of their very existence. From the natural resources extracted from the Global South for the manufacture of hardware, through the energy and cabling systems that support data centres, to the exploited labour and data used to train algorithms, the entire technical fabric of digital capitalism is permeated by racial power relations. Technology, therefore, is not neutral: at its most basic level, it incorporates a history of extraction, dispossession, and racialised hierarchisation.

Digital racism is thus not a ‘flaw’ in the system, but one of the ways in which it operates to sustain and reproduce racial hierarchies in a world increasingly mediated by digital technologies. Thinking about racial justice in the digital age therefore implies questioning not only the uses of technology, but also its design, infrastructure, forms of ownership and political logic.

Examples

Algorithmic racism

Algorithmic systems trained with historical data – data that is already racialised – tend to reproduce and reinforce pre-existing inequalities. Facial recognition systems are a prime example: they have significantly higher error rates when applied to racialised people. However, these technologies are often presented as ‘universal’, as if they worked the same way for everyone.
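The gap between a ‘universal’ headline figure and the experience of specific groups can be made visible by breaking error rates down by group. The following is a minimal sketch in Python under stated assumptions: the function name `error_rate_by_group`, the group labels and the toy records are all invented for illustration and do not come from any real facial recognition system or study.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: share of wrong predictions}.

    Each record is a (group, expected, predicted) tuple.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, expected, predicted in records:
        totals[group] += 1
        if expected != predicted:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Invented toy records (hypothetical, NOT real measurements):
# each tuple is (group label, correct answer, system's answer).
toy_records = [
    ("group_a", "match", "match"),
    ("group_a", "match", "match"),
    ("group_a", "no_match", "no_match"),
    ("group_a", "match", "match"),
    ("group_b", "match", "no_match"),
    ("group_b", "match", "match"),
    ("group_b", "no_match", "match"),
    ("group_b", "match", "match"),
]

# A single aggregate figure hides how errors are distributed.
overall = sum(1 for _, e, p in toy_records if e != p) / len(toy_records)

# The disaggregated figures show which group bears the errors.
rates = error_rate_by_group(toy_records)

print(f"overall error rate: {overall:.2f}")
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.2f}")
```

In this invented example the aggregate error rate looks moderate, while all of the errors fall on one group. Reporting only the aggregate is one way in which a system can be presented as working the same way for everyone.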

In reality, many of these systems have been designed with an implicit reference to a specific profile that conforms to the dominant social norm. Those who do not fit this norm – because of their skin colour, dress, accent, or any other socially marked trait – are more likely to experience failures, exclusions, or negative consequences when interacting with these systems.

Clear examples of this are predictive policing algorithms, in which Black or migrant communities are over-represented, as well as automated credit-scoring or recruitment models, which systematically penalise historically racialised groups.

Furthermore, in many cases, data on people and communities in the Global South is extracted without consent to train technologies developed by companies in the Global North, perpetuating colonial relationships in new forms. This phenomenon has been conceptualised as data colonialism (Couldry and Mejías, 2019; Mejías and Couldry, 2019; Dicarlo and Moncada Niño, 2024).

Governance of populations through datafication

Datafication refers to the process by which aspects of social reality are converted into quantifiable and codifiable data that can be processed using technological tools. This process is preceded by the massive accumulation of data, known as Big Data. Datafication is based on the premise that a large amount of data, correctly selected, organised and processed using algorithmic formulas, can offer objective solutions to complex problems of our time, from biomedical issues to social conflicts.

However, in contexts such as border control, security, health, or social services, the use of digital technologies enables new forms of classification, surveillance, and exclusion that disproportionately affect migrants and racialised populations. These dynamics are linked to what some authors have conceptualised as ‘automated state racism’, in which discriminatory decisions are incorporated and executed through seemingly neutral technological systems.

Digital platforms and the circulation of racism

Social media and digital platforms facilitate the circulation and virality of racist discourse, often amplified by algorithmic logic that prioritises conflict, polarisation and sensationalism as drivers of interaction. At the same time, content moderation mechanisms tend to penalise anti-racist activists and discourse more often than those who spread hate messages, thus reproducing unequal power relations in the digital space.

Activities

Activity 1: Map of racist technology

Objective

To understand how racism permeates all phases of a technology, from its manufacture to its use.

Start

Briefly introduce a technological object used in everyday life (e.g. a mobile phone or a camera with artificial intelligence) and ask the group the following questions:

- What do we know about how this object is manufactured?

- What materials are used and where do they come from?

- Who is involved in its assembly?

- Who benefits from and who is harmed by its production and use?

Main exercise

In groups, construct a spiral or chain-shaped map representing the different stages in the life cycle of the technological object being analysed. The map should cover the following aspects:

- Extraction of minerals and raw materials.

- Manufacturing and assembly locations.

- Who designs and programmes it (software and algorithms).

- What types of data it uses and where they come from.

- Where, how and for whom it is mainly marketed and where, how and by whom it is consumed.

Sharing and guided discussion

Based on the work done, a group discussion is held on the following questions:

- Which dimensions of digital racism are most invisible?

- How can these technologies be used for control, surveillance or exclusion?

- At each stage of the life cycle, which forms of racism – explicit or structural – can be identified?

- Which strategies can be devised to detect, challenge or resist these dynamics?

Final product

As a result of the work, a collective mural can be created in which the different objects analysed by the groups intersect. This mural can show international routes, connections between territories and power relations involved in the global cycle of technologies.

Activity 2: ‘The algorithm that sees me’

Objective

To reflect on how digital systems – algorithms, networks, and platforms – classify and treat people differently.

Start

The following questions are posed to the group:

- Which social networks do you use regularly?

- What type of content appears most frequently in your feeds?

- Is there uniformity in the content that users are exposed to, or are there significant differences?

Main exercise

Small groups are formed. Each group creates a fictional character with a specific identity, varying aspects such as origin, race, gender, age, sexual orientation, religion, social and political interests, or body type.

Next, the group imagines how this person would be ‘read’ or classified by different algorithmic systems:

- What kind of content would platforms such as Instagram or TikTok recommend to them?

- Which advertisements would they be most likely to receive?

- How would they be identified by a facial recognition system?

- Could they encounter obstacles in automated hiring or selection processes?

Sharing

Each group shares its case and a collective discussion is opened around the following questions:

- Who is most visible and who is most monitored in the digital environment?

- What roles, expectations or limitations do algorithms impose on different bodies and identities?

Final product

To conclude the activity, participants are asked to create a poster, infographic or mind map summarising the main conclusions of the exercise.

Resources

Readings and articles

- Marín Cisneros, A. El algoritmo de la raza: Notas sobre antirracismo y Big Data. https://cajanegraeditora.com.ar/el-algoritmo-de-la-raza-notas-sobre-antirracismo-y-big-data/ (Available in Spanish)

- Una introducción a la IA y la discriminación algorítmica para movimientos sociales. (2022). Algorace https://www.algorace.org/2022/11/26/una-introduccion-a-la-ia-y-la-discriminacion-algoritmica-para-movimientos-sociales/ (Available in Spanish)

- Phan, T. [Thao]. Entrevista: «Avui, l’algoritme és clau per concedir o no una petició d’asil». https://directa.cat/avui-lalgoritme-es-clau-per-concedir-o-no-una-peticio-dasil/ (Available in Catalan)

Audiovisual material

- Racisme Digital: Conversa amb Ruha Benjamin. Fundació Bofill. https://fundaciobofill.cat/actes/racisme-digital-conversa-amb-ruha-benjamin (Available in Catalan)

- The Coded Gaze: Bias in Artificial Intelligence. (n.d.). Conference at the Equality Summit, with Joy Buolamwini. https://www.youtube.com/watch?v=eRUEVYndh9c

References

Benjamin, R. [Ruha]. (2019). Race after technology: Abolitionist tools for the new Jim Code. Polity Press.

Browne, S. [Simone]. (2015). Dark matters: On the surveillance of Blackness. Duke University Press.

Couldry, N. [Nick] and Mejías, U. A. [Ulises Ali]. (2019). The costs of connection: How data is colonizing human life and appropriating it for capitalism. Stanford University Press.

Dicarlo, R. [Roberto] and Moncada Niño, Á. [Álvaro]. (2024). Herencia del pasado, desafíos del presente: Colonialismo de datos contemporáneo. Anales de Investigación en Arquitectura, 14(1). https://doi.org/10.18861/ania.2024.14.1

Eubanks, V. [Virginia]. (2018). Automating inequality: How high-tech tools profile, police, and punish the poor. St. Martin’s Press.

Mejías, U. A. [Ulises Ali] and Couldry, N. [Nick]. (2019). Colonialismo de datos: Repensando la relación de los datos masivos con el sujeto contemporáneo. Virtualis: Revista de Cultura Digital, 10(18), 78–97. https://www.revistavirtualis.mx/index.php/virtualis/article/view/289

Noble, S. U. [Safiya Umoja]. (2018). Algorithms of oppression: How search engines reinforce racism. NYU Press.

Phan, T. [Thao] and Wark, S. [Scott]. (2021). Racial formations as data formations. Big Data & Society, 8(1), 1–5. https://research.monash.edu/en/publications/racial-formations-as-data-formations/